- Artificial intelligence programs for camera trap image recognition have become quite good at identifying common wildlife, but they struggle with rare animals.

- Before AI can tell a badger from a raccoon, it needs to be trained with some images, but if a species is rarely seen in camera trap photos, there isn’t enough data for it to learn, and it won’t be very good at recognizing that rare species (‘rare-class categorization’).

- However, a new commentary explains that AI can learn from the kind of game engine-generated, hyper-realistic animal images that feature in today’s highly advanced digital games.

- This post is a commentary. The views expressed are those of the author, not necessarily of Mongabay.

A few years ago I was catching butterflies in a strip of rainforest in Panama. One day the camp welcomed a team of jet-lagged vagabonds dragging their rollers full of flashlights and 3D scanners. I thought they were a film crew, but if so they didn’t seem to have much of a script: they photographed and scanned whatever came their way—leaves, dead leaves, bugs, dead bugs. It turned out they were developers from a well-known game studio, here deep in the Cordillera to capture details of natural objects for their next big open world game. It wasn’t ironic that even in a fantasy world, the developers wanted the virtual flora and fauna to be as realistic as possible. Player immersion was the key—one of the developers’ name cards had “smell the grass” printed in stylized font.

A tenet of the modern conservation movement is the intrinsic value of biodiversity, but out there in the jungle, I thought, was a rakish horde of digital nomads who cherished biodiversity for a very alien reason: virtual world immersion. Over the years, their effort to digitally preserve our biosphere has been facilitated by advances in game engine rendering capability and has enlivened cyberspace and gaming consoles, bringing players the thrill of elk-hunting in the 19th-century Rocky Mountains or of horse-riding in a post-apocalyptic Pacific temperate forest. Increasingly, I have also observed that tools used by game developers are helping real-world conservationists.

Conservation practitioners don’t stare at gaming consoles; they tinker with camera traps—lots of them. A standard mammal survey employs hundreds of motion-triggered camera traps and gathers hundreds of thousands of photos. To make these data informative, the animal in each photo needs to be identified, and this can be a time-consuming process: photos feature a menagerie of raccoons nosing the camera, birds in flight, skunks breaking wind in self-defense, and most often, empty false-positives. Fortunately, most image identification in camera trap surveys is done by artificial intelligence (AI). But before AI can tell a badger from a raccoon, it needs to be trained with some annotated images.

Here’s the catch: if a species of animal is rarely seen in camera trap photos, there isn’t enough training data for the AI to learn about it; consequently, the AI won’t be very good at recognizing that particular rare species. In machine learning jargon, this is the problem of rare-class categorization. As Sara Beery et al. write, “[C]urrent computer vision systems struggle to categorize objects they have seen only rarely during training, and collecting a sufficient number of training examples of rare events is often challenging and expensive.”

Endangered animals, by definition, are rare classes: they are exciting and precious sightings not adequately represented in AI training datasets. As a result, current camera trap image recognition AI is good at calling out raccoons from just a tail in the corner of a photo, but can’t pick out an endangered kit fox staring right into the camera.
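To see why a rare species can go unnoticed even when an AI looks accurate overall, it helps to score each species separately. The sketch below is only an illustration: the photo counts and the classifier's answers are invented toy numbers, not results from any survey, and `per_class_recall` is a hypothetical helper written for this example.

```python
from collections import Counter


def per_class_recall(true_labels, predicted_labels):
    """Return {species: fraction of that species' photos labeled correctly}."""
    totals = Counter(true_labels)
    hits = Counter(t for t, p in zip(true_labels, predicted_labels) if t == p)
    return {species: hits[species] / totals[species] for species in totals}


# Invented toy survey: 95 raccoon photos and 5 kit fox photos.
true_labels = ["raccoon"] * 95 + ["kit_fox"] * 5
# Hypothetical classifier: every raccoon is right, but only 1 of 5 foxes is.
predicted_labels = ["raccoon"] * 95 + ["kit_fox"] + ["raccoon"] * 4

recall = per_class_recall(true_labels, predicted_labels)
overall = sum(t == p for t, p in zip(true_labels, predicted_labels)) / len(true_labels)

print(f"overall accuracy: {overall:.2f}")
for species, value in sorted(recall.items()):
    print(f"{species}: recall {value:.2f}")
```

In this made-up case the classifier is right on 96 of 100 photos, yet it finds only one kit fox in five. A single headline accuracy figure hides exactly the animals a survey may care about most.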

This can be self-defeating: some surveys aim to detect endangered animals, but our methods are biased against recognizing them, unless there’s a way to generate more images of endangered animals for the AI’s training dataset.

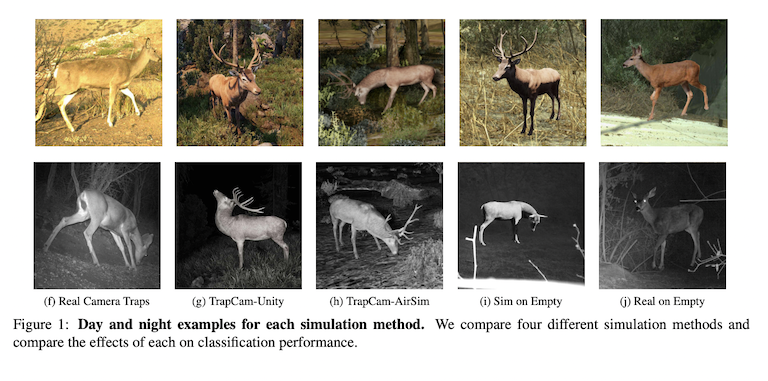

That’s where digital animal models in gaming environments come in. Turns out, AI doesn’t fuss about learning from game engine-generated, hyper-realistic animal images. For example: a colleague of mine wanted to increase her AI’s ability to detect deer in camera trap images captured across the southwestern United States (deer, along with badgers and foxes, are rare sightings, while raccoons are most common).

Her team simulated a forest environment in Unity (a widely used game engine) and loaded 17 digital deer models created by other game developers. They took thousands of “photos” of digital deer roaming the digital forest, and used these images to train their AI. Feeding the AI images of these “synthetic deer” increased its deer detection rate by 40%. That is a huge win for finding more deer, at the minimal cost of using pre-developed environments and pre-modeled deer.
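The general idea of topping up a rare species with rendered images can be sketched in a few lines. This is not the team's actual pipeline, and it does not cover rendering in Unity or training a model. It only shows one plausible way to mix real and synthetic photos so that each species reaches a minimum count. The function name, file paths, counts and the `min_per_class` threshold are all assumptions made up for the example.

```python
import random
from collections import Counter


def top_up_with_synthetic(real, synthetic, min_per_class, seed=0):
    """Add synthetic photos for species with fewer than min_per_class real ones.

    real, synthetic: lists of (image_path, label) pairs.
    Returns a new list; every real photo is kept.
    """
    rng = random.Random(seed)  # fixed seed so the selection is repeatable
    real_counts = Counter(label for _, label in real)

    # Group the synthetic photos by species.
    pool = {}
    for item in synthetic:
        pool.setdefault(item[1], []).append(item)

    combined = list(real)
    for label, candidates in pool.items():
        shortfall = max(0, min_per_class - real_counts[label])
        # Never ask for more synthetic photos than the pool contains.
        combined.extend(rng.sample(candidates, min(shortfall, len(candidates))))
    return combined


# Hypothetical file names and counts, for illustration only.
real = [(f"real/raccoon_{i}.jpg", "raccoon") for i in range(500)]
real += [(f"real/deer_{i}.jpg", "deer") for i in range(12)]
synthetic = [(f"synthetic/deer_{i}.png", "deer") for i in range(1000)]

training_set = top_up_with_synthetic(real, synthetic, min_per_class=200)
counts = Counter(label for _, label in training_set)
print(counts["raccoon"], counts["deer"])
```

Here the 12 real deer photos are joined by 188 rendered ones, while the raccoons, already plentiful, are left alone. Capping the top-up at a threshold rather than adding every render is a design choice for this sketch; the right mix of real and synthetic images would need to be checked against real camera trap photos.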

It’s about more than detecting deer; it’s about equal representation. We often hear about how AI is drastically changing the landscape of conservation biology, but conservationists have these synthetic beasts and their virtual habitats to thank for making sure our AI is not ignoring the rarely seen and the underrepresented: the dipteran pollinators, the cave-dwelling bats, the crawlers and creepers. They matter for their intrinsic biodiversity value. As more animals and even entire biospheres are rendered by a community of talented game developers at shockingly realistic resolution, future applications range from cameras that record flower-visiting insects to web-scrapers that detect promotional images of the illegal wildlife trade. We can use game engines to write textbooks for AI to read.

What I find most satisfying in this development is the positive feedback loop of what happens when a group of humans “smell the grass.” Remember, hyper-realistic models of nature exist because game developers dragged their 3D scanners from deserts to rainforests. Their creation is not just nature-inspired, but nature-endowed. Today, in an unexpected quid pro quo, they have found a way to help protect she who gives.

I conclude with a confession: dangling too much with my conservationist hat in that rainforest, I once thought game developers were geeks who prefer fantasy to solving real-life problems, while I did ‘work that matters.’ I couldn’t have been more short-sighted. Gaming is a $170 billion industry, larger than music, movies and sports combined; more importantly, it embodies the zeitgeist of our youth. I talk big about outreach and teaching, but maybe I should be asking: what more can conservationists learn from gaming?

Zhengyang Wang is a conservation biologist who uses emerging technologies in molecular ecology and remote sensing to monitor insects across the landscape.
